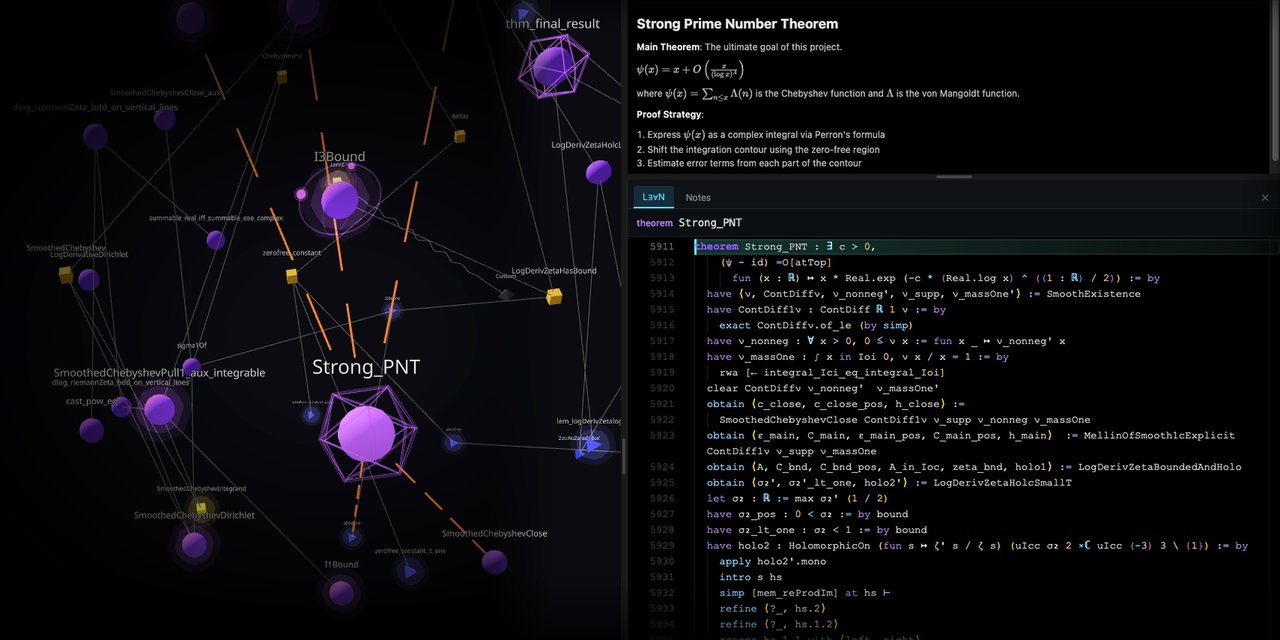

Visualization produced by Astrolabe.

From SMT Solvers to Lean and the Future of Automated Reasoning

by Nicola Gigante — 10 March 2026

Topics: Interview

We start the interview when it’s late afternoon in Italy but early morning in Seattle, where Leo de Moura lives.

Let’s start with some basic info about yourself. Where did you study?

I did all my studies in Brazil, a long time ago. The university I went to is called PUC in Rio de Janeiro.

And then, of course, you became widely recognized in the automated reasoning community. How did you approach the topic? What got you interested in it in the first place?

I started in static analysis. My thesis was on static analysis in compilers. From there, I moved to model checkers. SRI was my first job, and I started working on their model checker. They had a language called SAL, which still exists, for modeling protocols.

You can model protocols there and verify them using model checkers and static analysis. At the time, especially when I was doing my PhD, there were many papers showing the relationship between static analysis and model checkers.

To be honest, SRI is where I learned all about SMT solving, SAT solving, theorem provers, and so on. They develop PVS. Shostak’s method for combining decision procedures was created at SRI. Greg Nelson was developing the Nelson-Oppen approach at Stanford, and SRI people were working on Shostak’s method.

SRI has a deep heritage in this area, and that’s where I learned about SMT and switched to this field.

And then you moved to Microsoft Research?

Yes. My last project at SRI was an SMT solver called Yices. In 2006, Microsoft needed an SMT solver. They hired me to build one. So I moved there and started the Z3 project.

And you had been in charge of the project since the beginning?

Yes! It was a long time ago—it’s going to be twenty years in 2026.

And then you moved to AWS some years ago, right?

Yes, I moved to AWS roughly three years ago. Before that, I was at Microsoft for almost seventeen years. I worked on Z3 from 2006, when I joined, until 2013. In 2013, I started working on Lean.

I worked on Lean until I left Microsoft Research. I had only two projects at Microsoft: Z3 and Lean. It feels like it’s the opposite, but actually I worked seven years on Z3 and ten years on Lean before I moved to AWS.

So your career developed mostly in industry, first at Microsoft and then at Amazon. What is your opinion on the current relationship between industry and academia?

I think both places have strong ties to academia.

Both Microsoft Research and Amazon have great internship programs. They bring many interns every summer. We get students every year from all major universities. Both places hire PhD students, and they have programs where professors can come as visiting researchers for one month or more.

Amazon has a program called Amazon Scholar, where a professor can stay one day a week at Amazon or longer. Sometimes they take a sabbatical and stay full time.

We go to conferences, we publish papers. It feels tightly connected.

It also depends on the setting. At Microsoft, I was a researcher; at Amazon, an applied scientist. I never worked in the product teams. I guess it would be a completely different story if I had.

And besides the research divisions you worked in, do you think the intersection of these companies with academia is strong enough? Or would you improve something on both sides?

I think Byron Cook has done a great job educating the leadership at Amazon on the importance of automated reasoning and formal methods. We have the Amazon Research Awards program, where academics submit proposals and get funded—the funding can be around $100K. We constantly have people at Amazon going to universities to establish relationships.

These connections are crucial, especially now that everyone is so focused on AI. Interest in formal methods in academia is decreasing. In many American universities, everyone only wants to do AI. AI is important, but so is everything else.

At Amazon, we invest a lot of energy going to universities and making the case that formal methods and automated reasoning matter. Even if you care deeply about AI, formal methods are critically important. If you believe that in the future AI will write code, it’s more important than ever to have formal methods.

Are you aware of specific ways in which Amazon or Microsoft are using formal methods in their product divisions? Were you involved in some of them?

At Amazon, it’s everywhere. Policy languages, cryptography, AI. People verify systems in S3, EC2, and other services. The formal methods division is really big—more than 200 people, though I don’t have the exact number. They work in automated reasoning across different teams. It’s not just a single group. Byron started with a single group, but there are many spin-offs now: people working on products that contain automated reasoning, and people verifying real systems.

At Microsoft, automated reasoning had a golden age in security. The static driver verifier and Sage, for example, were hugely influential. But there has been a big shift toward AI in Microsoft Research, and the number of papers in automated reasoning has dropped significantly. These things go in cycles.

Let’s talk now about your invited talk at ETAPS. Can you tell us what it will be about?

Yes! It’s a tutorial about Lean. I want to show people how to get started—it’s going to be an introductory tutorial. For the talk in the industry track, I’m planning to talk about automated reasoning and AI: why it’s important, what’s happening in industry, not just at Amazon, but also at startups that are using automated reasoning together with AI.

I think automated reasoning has never been so relevant as it is right now. That’s one message I want to communicate to my colleagues in academia.

For example, one initiative I want to mention is expMath, the exponential math initiative from DARPA, which Patrick Shafto is pushing forward. In the past, nobody would have imagined that DARPA would invest in formal mathematics. This initiative is pushing the application of formal methods to mathematics, combined with AI.

I want to bring these initiatives to people’s attention. Many people are not aware of what these startups are doing in this area and the progress they’ve made.

In December 2025, Aristotle, an AI built by the startup Harmonic, solved a conjecture by Erdős that had been open for more than 30 years. Within days, Terence Tao, the greatest living mathematician, reviewed the result. Since the proof was formally verified, its correctness was not in question. Tao only needed to check that the formal statement precisely reflected the open conjecture.

Just three years ago, nobody would have imagined this was possible. People would have called it science fiction, but now it’s happening. And this is just the beginning.

There’s another example that’s mind-blowing. Take ByteDance, the company behind TikTok. Could you imagine that ByteDance cares about formal methods? They built an AI that creates formal proofs, and managed to get a gold medal in the International Mathematical Olympiad. This is a for-profit company, but they see the potential of combining AI with formal methods. They’re investing heavily, hiring students from CMU to work on these projects.

You mentioned Terence Tao. Recently he’s all in on using Lean for verifying proofs, including those created by AI. Are you in touch with him directly?

Yes, we’ve talked a couple of times. He’s a very busy person, and I’m grateful for the support he has given to Lean. He truly believes in the combination of formal methods and AI, and that it will change how we do mathematics in the future.

For example, he went on the Lex Fridman podcast, one of the most popular podcasts in the US, and spent almost an hour talking about automated reasoning, formal methods, and how they’re going to change mathematics together with AI. I’ll definitely mention that during my talk.

Lean is gaining a lot of traction in the automated reasoning community. Is there something in the project that worked really well, better than other proof assistants that were available in the past?

Build. Build again. Build again. Make mistakes. Learn from your mistakes. Try again. Keep improving. Listen to your users.

We listen to our users. I don’t want to name specific projects—I’m not here to bash anybody—but listening to your users is really important: what they care about, what’s important to them.

The first ingredient is listening. The second is scalability. Newcomers sometimes think they can do everything in any system. In principle, maybe. But in practice, scalability matters enormously. We spent a lot of time optimizing, finding new algorithms, and making sure Lean performs well on the problems users actually face.

The third ingredient is extensibility. Lean is highly extensible, and this was important because when I was at Microsoft, I did not have resources. It was just me working alone there. One trick I used was to make Lean so extensible that users could change it and make it their own.

And the math community did exactly that. Mathlib has more than 2 million lines of definitions, theorems, and proofs, and 50,000 of those lines are Lean extensions implemented by the community.

They also built many linters, which were very important for scaling the project—not in terms of performance, but in terms of the number of people contributing. You can manually check that everyone follows coding conventions, but you really scale by having tools that check consistency automatically.

Those are the three main reasons: listening to users, making sure they’re not blocked by scalability issues, and making the system extensible.

One thing I noticed comparing Lean with other proof assistants is that Lean is self-hosted—Lean is written in Lean. This is quite an achievement for any programming language. Did you decide from the beginning that this was a goal for the project?

No, not at the beginning.

We had three major versions of Lean. The first was just a prototype to learn the basics of type theory. Then we built Lean 2, the version we published at CADE in 2015. It did not have a good tactic framework, but it was usable. We had maybe thirty users of Lean 2.

Then we realized we needed a real tactic framework, so I added an interpreter to Lean that lets you write your own tactics in Lean itself. You could also implement extensions and linters in Lean, and this became very popular.

Then, I decided to push extensibility to the limits because it was popular with our users. I also felt like we started enjoying programming in Lean. It was much more pleasant than writing in C and C++. So I said “ok, let’s do it in Lean.”

People thought I was crazy. Nobody believed it could be done. Sebastian Ullrich and I worked for three years on it. At the end of 2020, we managed to bootstrap Lean using Lean. It was one of the happiest days of the project.

The strategy was to make the language as small as possible. You need to compile the compiler using itself, so it has to be minimal. We started removing all the nonessential features, then kept pushing forward—moving the boundary of how much you can compile without failing, until one day you do it and it works.

So I think you have strong memories of that moment when you compiled Lean using Lean.

Oh, yes. And the next big thing was to move Mathlib to Lean 4.

When we started Lean 4 in 2018, we decided to also fix the language. There were things we wanted to fix in the syntax and semantics, so Lean 4 was not going to be backward compatible. At the time, Mathlib was only 40,000 lines of code. It wasn’t hard to imagine rewriting it from scratch in the worst case.

But it took us three years to write Lean 4. By the time we finished, Lean 3 had gained a lot of popularity. There were more than 1,000,000 lines of Mathlib at that point. Porting it was the next battle. People said Lean was going to be like Python, where the transition from Python 2 to Python 3 split the ecosystem for years.

But people underestimated the power of the community. Lots of people showed up. It was a huge community effort, and they managed to complete the port in 2023.

Thank you very much for your answers, Leo. Is there anything else you want to add?

One thing, yes. I would like to mention the Lean FRO, a non-profit organization focused on developing Lean. Sebastian Ullrich and I launched it in August 2023.

We now have engineers working full time on Lean. We have great documentation. Every month we release a new version of Lean with tons of new features. Progress has accelerated dramatically since 2023. Before the Lean FRO, Lean was a research project; now we view it as a product. We aim for very high quality, not just as a theorem prover but also as a programming language.

The Lean FRO is very important to us. We are always working to keep it funded, and spreading the word is essential. If you want to learn more about Lean or get involved, visit lean-lang.org/fro.

Nice, thank you again very much, Leo.

Thank you too, have a nice day!